Your fiber network never lies. When performance falls short of what the spec sheet promised, the root cause almost always comes down to three measurable realities: dispersion, attenuation, and return loss.

These factors decide whether a link delivers clean 10 Gbps throughput or struggles to hold 2.5 Gbps. Ignore them, and even the most expensive infrastructure underperforms. Fiber characterization reveals how light actually moves through your cable. Experienced fiber technicians measure these parameters to expose hidden flaws, confirm design assumptions, and pinpoint exactly where performance breaks down. This work blends precision testing with real-world problem solving, and it sits at the center of every successful deployment, upgrade, or restoration.

What Is Fiber Characterization? (And Why It Matters)

Fiber characterization is the systematic testing of optical fiber to measure how it transmits light. Think of it as a health checkup for your network's arteries. Instead of blood pressure and cholesterol, you're measuring things like attenuation (signal loss), chromatic dispersion (pulse spreading), and return loss (reflected light).

Here's why it matters: fiber optic cables don't fail the way copper cables do. They degrade slowly, invisibly, and often in ways that don't trigger alarms until it's too late. A fiber link might look fine with basic testing but still cause packet loss, jitter, or intermittent outages because of hidden issues like macro-bends, splice defects, or dispersion.

Dispersion: When Light Spreads Out

Dispersion is what happens when a clean, sharp pulse of light starts to lose its shape as it travels through fiber. Think of shouting across a canyon. Your voice may start out crisp, but by the time it reaches the other side, echoes smear the sound and the words run together. Light behaves the same way in an optical fiber. As distance increases, dispersion causes light pulses to spread out and blur into one another, making it harder for the receiver to tell where one pulse ends and the next begins.

One major contributor is chromatic dispersion. The light from a transmitter is not a single color but a narrow band of wavelengths, and each wavelength travels at a slightly different speed through glass. Over long distances, those tiny speed differences add up. The pulse stretches, much like runners who start a race together but spread out along the course because each holds a slightly different pace.

Chromatic dispersion is measured in picoseconds per nanometer per kilometer. In standard single-mode fiber such as SMF-28, dispersion at 1550 nanometers is about 17 ps/(nm·km). That number seems small until you multiply it by distance. Over an 80-kilometer span, it accumulates to roughly 1,360 picoseconds per nanometer. At 10 gigabits per second, a system can tolerate roughly 1,000 picoseconds per nanometer before errors rise sharply, so an uncompensated span of that length is already over the limit. Push the data rate to 40 gigabits per second, and that tolerance collapses to around 60 picoseconds per nanometer.
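The arithmetic behind those figures can be sketched in a few lines of Python. This is a minimal illustration, not a design tool: the function names are hypothetical, and the inverse-square scaling of tolerance with bit rate is a common rule of thumb assumed here because it reproduces the drop from about 1,000 to about 60 ps/nm.

```python
# Illustrative sketch: helper names and the scaling rule are assumptions.

def accumulated_dispersion_ps_nm(coeff_ps_nm_km, length_km):
    """Total chromatic dispersion (ps/nm) for a uniform span of fiber."""
    return coeff_ps_nm_km * length_km


def scaled_tolerance_ps_nm(ref_tolerance_ps_nm, ref_rate_gbps, rate_gbps):
    """Rule of thumb: tolerance shrinks with the square of the bit rate."""
    return ref_tolerance_ps_nm * (ref_rate_gbps / rate_gbps) ** 2


# Figures taken from the text: 17 ps/(nm*km) at 1550 nm, 80 km span.
span_total = accumulated_dispersion_ps_nm(17.0, 80.0)

tolerance_10g = 1000.0  # ps/nm, approximate figure from the text
tolerance_40g = scaled_tolerance_ps_nm(tolerance_10g, 10.0, 40.0)

# Longest span that stays inside the 10G tolerance without compensation.
uncompensated_reach_10g_km = tolerance_10g / 17.0

print(f"80 km span accumulates {span_total:.0f} ps/nm")
print(f"Estimated 40G tolerance: {tolerance_40g:.1f} ps/nm")
print(f"Uncompensated 10G reach: {uncompensated_reach_10g_km:.1f} km")
```

Under these assumptions, the 10G tolerance is used up a little short of 60 kilometers, which is why longer spans typically rely on some form of dispersion compensation.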

Polarization mode dispersion adds another layer of complexity. Light in a fiber travels in two polarization states, similar to horizontal and vertical waves. If the fiber core is not perfectly symmetrical, which can happen due to manufacturing variations, bending, or mechanical stress, those two states move at different speeds. The result is a slight timing skew that spreads the pulse even further. Unlike chromatic dispersion, PMD is random. It shifts with temperature and mechanical stress, and it accumulates with the square root of distance rather than linearly, which is why it is measured in picoseconds per square root kilometer. Modern network standards recommend PMD coefficients below 0.5 ps/√km. On a 100-kilometer link, that translates to a total PMD of about 5 picoseconds. It is a tight margin, but one that high-speed optical systems depend on to maintain clean, reliable transmission.
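To see why that margin tightens as speeds rise, it helps to compare total PMD against the length of one bit. The sketch below is illustrative only: the function names are hypothetical, and expressing PMD as a share of the bit period is a convenient way to compare rates, not a pass/fail criterion taken from this article.

```python
import math


def total_pmd_ps(coeff_ps_per_sqrt_km, length_km):
    """Total PMD (ps): coefficient times the square root of the length."""
    return coeff_ps_per_sqrt_km * math.sqrt(length_km)


def bit_period_ps(rate_gbps):
    """Duration of one bit in picoseconds at the given line rate."""
    return 1000.0 / rate_gbps


# Figures from the text: 0.5 ps/sqrt(km) over a 100 km link.
pmd = total_pmd_ps(0.5, 100.0)

for rate in (10, 40):
    share = pmd / bit_period_ps(rate)
    print(f"{rate} Gbps: PMD of {pmd:.1f} ps is {share:.0%} of one bit period")
```

The same 5 picoseconds takes up four times as much of each bit at 40 Gbps as it does at 10 Gbps, because the bit period itself shrinks from 100 ps to 25 ps.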

Where Signal Loss Happens

Attenuation is the gradual reduction in signal strength as light moves through the fiber. As the signal travels, a portion of the light energy is lost to absorption within the glass, microscopic scattering, and small imperfections formed during manufacturing. Splices and connectors add discrete losses of their own, usually called insertion loss. This loss is unavoidable, but it must be carefully managed to keep a network stable and within its power budget.

Technicians measure attenuation in decibels per kilometer. In standard single-mode fiber such as SMF-28, loss at 1310 nanometers is typically around 0.35 dB per kilometer, while at 1550 nanometers it drops to about 0.20 dB per kilometer. That difference is why wavelength matters. Shorter wavelengths scatter more as they interact with the glass structure, while longer wavelengths travel more efficiently. This is also why long-haul and high-capacity networks favor 1550 nanometers. The signal simply goes farther with less power loss.

The fiber itself is only part of the story. Real-world attenuation adds up quickly once splices, connectors, and bends enter the equation. A poorly cleaned connector can introduce half a decibel of loss on its own. A tight bend in a cable can add another 0.3 dB without anyone noticing. When multiple small issues stack together, a link that looked fine on paper can burn through a 20 dB power budget faster than expected.
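A quick loss tally shows how fast the margin disappears. The sketch below uses the per-kilometer figures quoted above, but the 80 km length and the list of event losses are hypothetical values chosen for illustration, as is the function name.

```python
def link_loss_db(length_km, fiber_db_per_km, event_losses_db):
    """Total link loss: fiber attenuation plus every discrete event."""
    return length_km * fiber_db_per_km + sum(event_losses_db)


BUDGET_DB = 20.0   # power budget from the text
LENGTH_KM = 80.0   # assumed span length

# Hypothetical events: two dirty connectors, one tight bend, three splices.
events_db = [0.5, 0.5, 0.3, 0.1, 0.1, 0.1]

loss_1550 = link_loss_db(LENGTH_KM, 0.20, events_db)
loss_1310 = link_loss_db(LENGTH_KM, 0.35, events_db)

for label, loss in (("1550 nm", loss_1550), ("1310 nm", loss_1310)):
    margin = BUDGET_DB - loss
    status = "within budget" if margin >= 0 else "over budget"
    print(f"{label}: loss {loss:.1f} dB, margin {margin:+.1f} dB ({status})")
```

With these assumed numbers the 1550 nm link keeps only a couple of decibels in reserve, and the same span at 1310 nm exceeds the budget on fiber loss alone.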

Return Testing: The Echo That Tells the Truth

Optical Return Loss, or ORL, measures how much light reflects back toward the transmitter instead of continuing down the fiber. Every time light encounters a change in refractive index, such as a connector interface, a splice point, a microscopic crack, or even a small air gap, a portion of that energy bounces backward. In a controlled system, reflections remain minimal. In a poorly installed or contaminated link, they quickly become a serious performance threat.

Technicians measure ORL in decibels. Many instruments display it as a positive number, where higher is better. Written as a negative value, as it is here, the more negative the reading, the better the performance. A reading of negative 40 dB or lower indicates a strong link with minimal reflectance. Around negative 30 dB is generally acceptable for many systems. Once measurements approach negative 20 dB or higher, reflections become problematic and demand investigation.
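Decibel readings are easier to judge once they are converted back into a share of reflected power. The sketch below does that conversion and sorts a reading into the bands described above. The function names are hypothetical, and the exact cut points are an assumption of this sketch: it conservatively flags anything worse than negative 30 dB for investigation.

```python
def reflected_fraction(orl_db):
    """Fraction of launched power reflected back, for a negative-dB reading."""
    return 10 ** (orl_db / 10)


def classify_orl(orl_db):
    """Sort a negative-dB ORL reading into bands (cut points are assumed)."""
    if orl_db <= -40:
        return "strong"
    if orl_db <= -30:
        return "acceptable"
    return "investigate"


for reading in (-45.0, -32.0, -20.0):
    percent = reflected_fraction(reading) * 100
    print(f"{reading:.0f} dB: {percent:.4f}% reflected, {classify_orl(reading)}")
```

Every 10 dB step is a factor of ten in reflected power, so a link at negative 20 dB sends back a hundred times more light than one at negative 40 dB.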

Reflected light disrupts transmission because it interferes directly with the source laser. The effect resembles trying to speak clearly while someone echoes your own words back at full volume. In high-speed digital systems and especially in analog applications, that interference can distort signals, increase bit error rates, and destabilize the transmitter.

The stakes rise even higher in dense wavelength-division multiplexing (DWDM) systems. In DWDM environments, multiple wavelengths share the same fiber simultaneously. Even minor reflections can introduce crosstalk between channels, degrading overall system integrity. For 40G, 100G, and coherent optical systems, ORL testing is not optional. It is a fundamental validation step that ensures the link performs reliably under high data rates and tight optical tolerances.

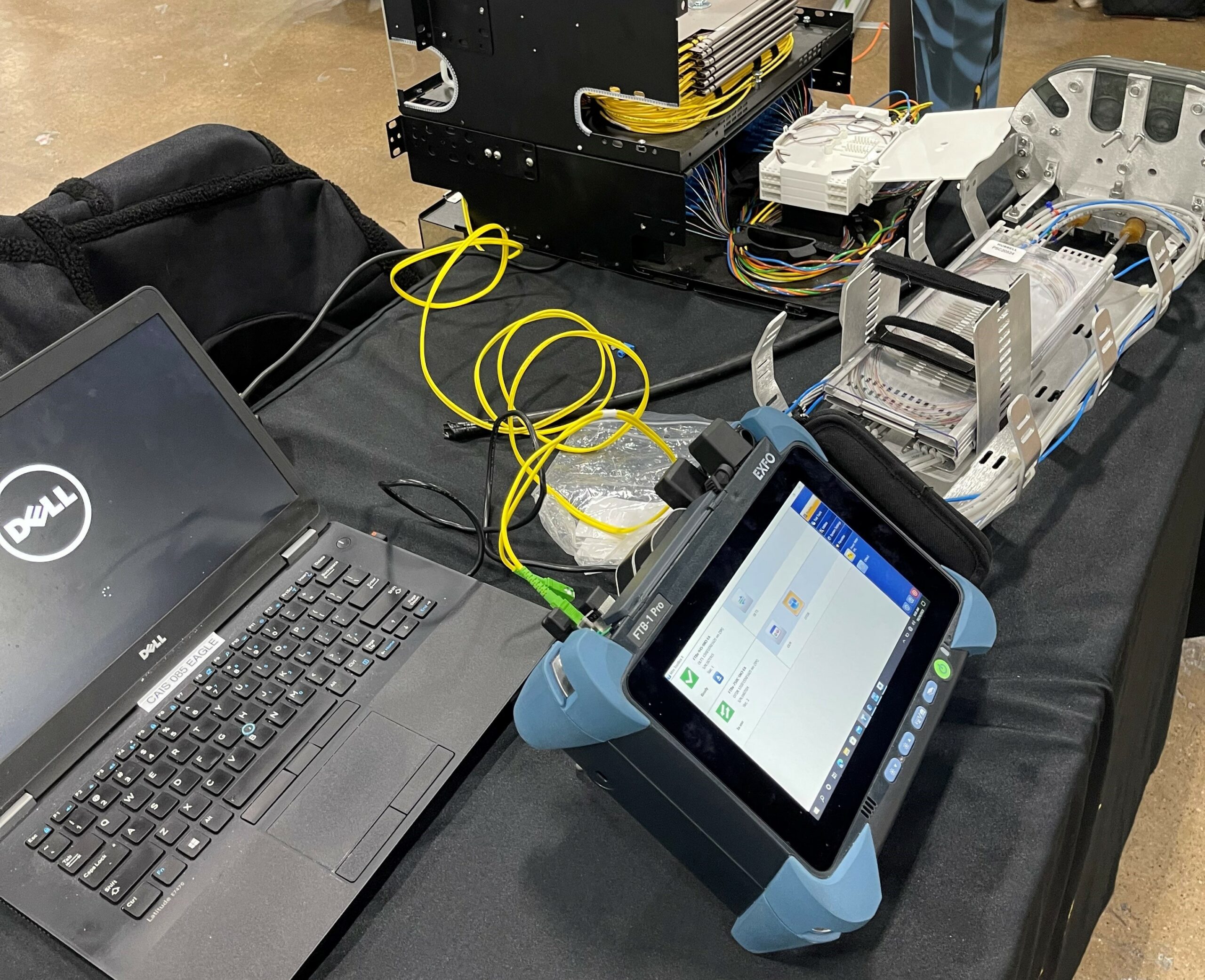

Choosing the Right Fiber Characterization Test Equipment

Not all fiber test equipment delivers the same level of insight. The right tools determine whether you diagnose a problem accurately the first time or chase symptoms for weeks. Serious network validation starts with instruments that measure performance comprehensively, not just superficially.

An Optical Time-Domain Reflectometer, or OTDR, remains the foundation of fiber testing. It sends controlled light pulses down the fiber and analyzes the backscattered and reflected light that returns. From that data, it maps splice points, connector interfaces, bends, and breaks while quantifying loss at each event. When a link fails or underperforms, the OTDR provides the visual fingerprint that pinpoints exactly where performance degrades.
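The way an OTDR turns timing into location comes down to one relationship: light travels out to the event and back, so distance is the speed of light in the fiber times half the round-trip time. The sketch below illustrates this under stated assumptions. The function name is hypothetical, and the group index of 1.468 is a typical value for standard single-mode fiber, not a figure from this article; real instruments let the technician set it per fiber type.

```python
C_VACUUM_M_PER_S = 299_792_458.0  # speed of light in vacuum


def event_distance_m(round_trip_s, group_index=1.468):
    """Distance to an event from the round-trip time of its returned light.

    group_index is an assumed typical value for single-mode fiber.
    """
    speed_in_fiber = C_VACUUM_M_PER_S / group_index
    return speed_in_fiber * round_trip_s / 2.0


# A return that arrives 100 microseconds after the pulse was launched.
distance = event_distance_m(100e-6)
print(f"Event located about {distance / 1000:.2f} km down the fiber")
```

Because the result depends directly on the group index, a wrong index setting shifts every event on the trace by the same percentage, which matters when a crew is digging for a break.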

A chromatic dispersion analyzer becomes essential as data rates increase. It measures dispersion across multiple wavelengths and quantifies how pulse spreading will impact transmission over distance. Networks operating at 10 gigabits per second and above rely on accurate CD measurements, particularly at both 1310 nanometers and 1550 nanometers, where dispersion behavior differs significantly.

A PMD analyzer addresses polarization mode dispersion through interferometric measurement techniques. As speeds move into 40 gigabits per second and coherent optical systems, PMD margins tighten dramatically. Without precise PMD data, high-speed links can appear stable during commissioning but fail unpredictably under load.

An optical return loss meter completes the picture by measuring reflections along the link and at connector interfaces. Many advanced OTDR platforms integrate ORL testing, allowing technicians to validate both attenuation and reflectance in a single workflow.

For DWDM deployments or 100G systems, an integrated fiber characterization platform offers a strategic advantage. It evaluates attenuation, dispersion, PMD, and return loss in one coordinated pass. This approach accelerates commissioning, improves measurement consistency, and reduces the risk of overlooking subtle impairments that only surface at scale.